Extend The Lifespan of Your Raspberry Pi's SD Card with log2ram

Log2ram is a tool that redirects logs to memory instead of the SD card. Here's how I used it to extend the lifespan of the SD card in my Raspberry Pi.

In my previous post, I talked about how you can use zram to squeeze more memory out of your Raspberry Pi at no cost. In this post, I will talk about how you can then use that additional compressed memory to extend the life of the SD card on your Raspberry Pi.

The brilliant design of using SD cards

Since the advent of the Raspberry Pi, almost all single-board computers (SBCs) on the market have followed its lead in using SD cards as the main storage medium for the OS.

The main benefits of doing so, as I see it, are:

- The convenience of not needing to disconnect and move the device to your computer to be reset or re-flashed in the event of some catastrophic failure of the OS

- The low price-point of SD cards enabling cheap replacement in the event of storage failure

- Faster iterative learning and feature-set switches, where the device can play a completely different role just by swapping the SD card which entails switching the OS.

For example: Media-center ➡️ Desktop replacement ➡️ IoT hub

When that brilliance becomes a problem

While SD cards are great for learning and small personal projects, SBCs have since outgrown their original use cases as a result of the specifications arms race between the Raspberry Pi Foundation and the other SBC manufacturers.

For example, at the time of writing, the Raspberry Pi 4 Model B is available with 8GB of RAM, which is far more than a simple IoT controller needs. It's therefore unsurprising that the SBC market is slowly but surely outgrowing its original use cases.

What I use SBCs for

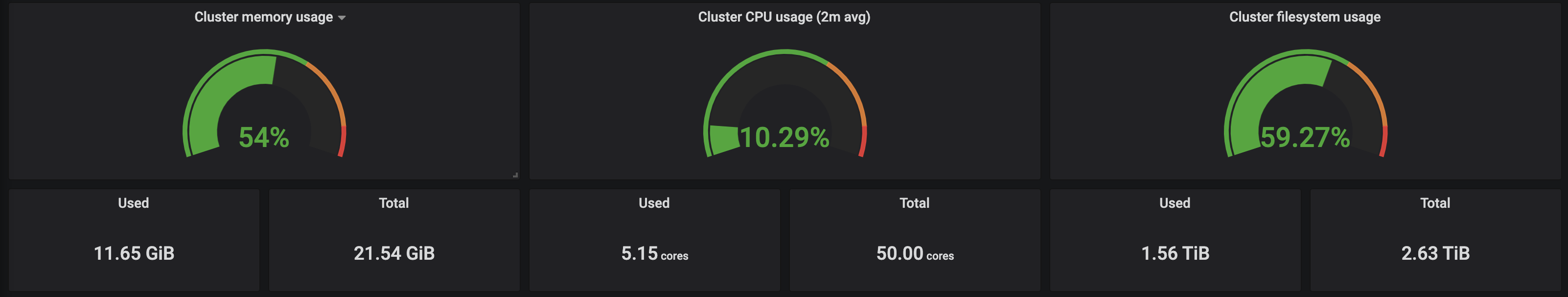

I currently run self-hosted web services for my family and close friends (up to 20 users) on 11 SBCs totalling 50 cores and 23GB of RAM, spread over 2 clusters, Kraken and Leviathan.

This is a rather extreme example, but if your use case even remotely touches running a server 24/7, then you are bound to run into SD card reliability issues at some point during its lifespan.

The problem with SD cards

SD cards are, in essence, identical to flash drives and SSDs. All 3 storage solutions use NAND-based flash memory to persist data without power.

The main difference between SD cards/flash drives and the more expensive SSDs lies in the underlying NAND flash technology. SD cards and flash drives typically use MLC (multi-level cell) technology, where multiple bits of information (up to 5 in the densest variants) are stored in a single storage element, whereas high-endurance SSDs use SLC (single-level cell) technology, where only 1 bit of information is stored in a single storage element. An analogy for this is apartment rentals.

Apartment rental analogy

Assume that you own a building of 100 apartments. This building represents your storage device, apartments represent storage elements on the device and tenants represent your data.

SLC scenario

If you set a maximum occupancy per apartment to just 1 person, then this would result in a relatively low density of tenants, with a high standard of living, and consequently, high cost per head as the building may only house 100 tenants. Tenants can also be located quickly given that 1 apartment always corresponds to 1 tenant. This situation reflects the typical NAND technology in SSDs, basically premium, fast and reliable storage.

MLC scenario

If you raise the maximum occupancy per apartment to 5, then this would result in a 5-fold increase in the density of tenants. Even though this brings down the cost-per-head to a fifth of the earlier scenario, allowing your building to house 500 tenants, high-density apartments tend to have a lower standard of living. Tenants are also more tedious to locate, given that each apartment may house multiple tenants.

On top of that, high-density apartments also lead to an increased crime rate, as evidenced by Hong Kong's Kowloon Walled City, the world's most densely populated settlement before its demolition in 1994. Crime rate here can be seen as analogous to data corruption rate. This situation reflects the typical NAND technology in flash drives, where storage is cheap but slow, with a higher chance of data corruption.

What this all means

SD cards will show the effects of wear and tear earlier than SSDs and other storage media because their storage technology has a shorter lifespan, especially if your usage is write-heavy. Unfortunately, practically everything out there has a write-heavy component, with the exception of simple scheduled scripts, so you should expect to replace the SD card on your Raspberry Pi, on average, every 12 months.

This, however, does not mean that your Raspberry Pis are doomed.

There is still hope.

Hello log2ram

In a typical server, most write operations come not from the assets themselves but from logs: logs from the Linux kernel, the server daemon, its workers, and any other software that you may have colocated on the same machine.

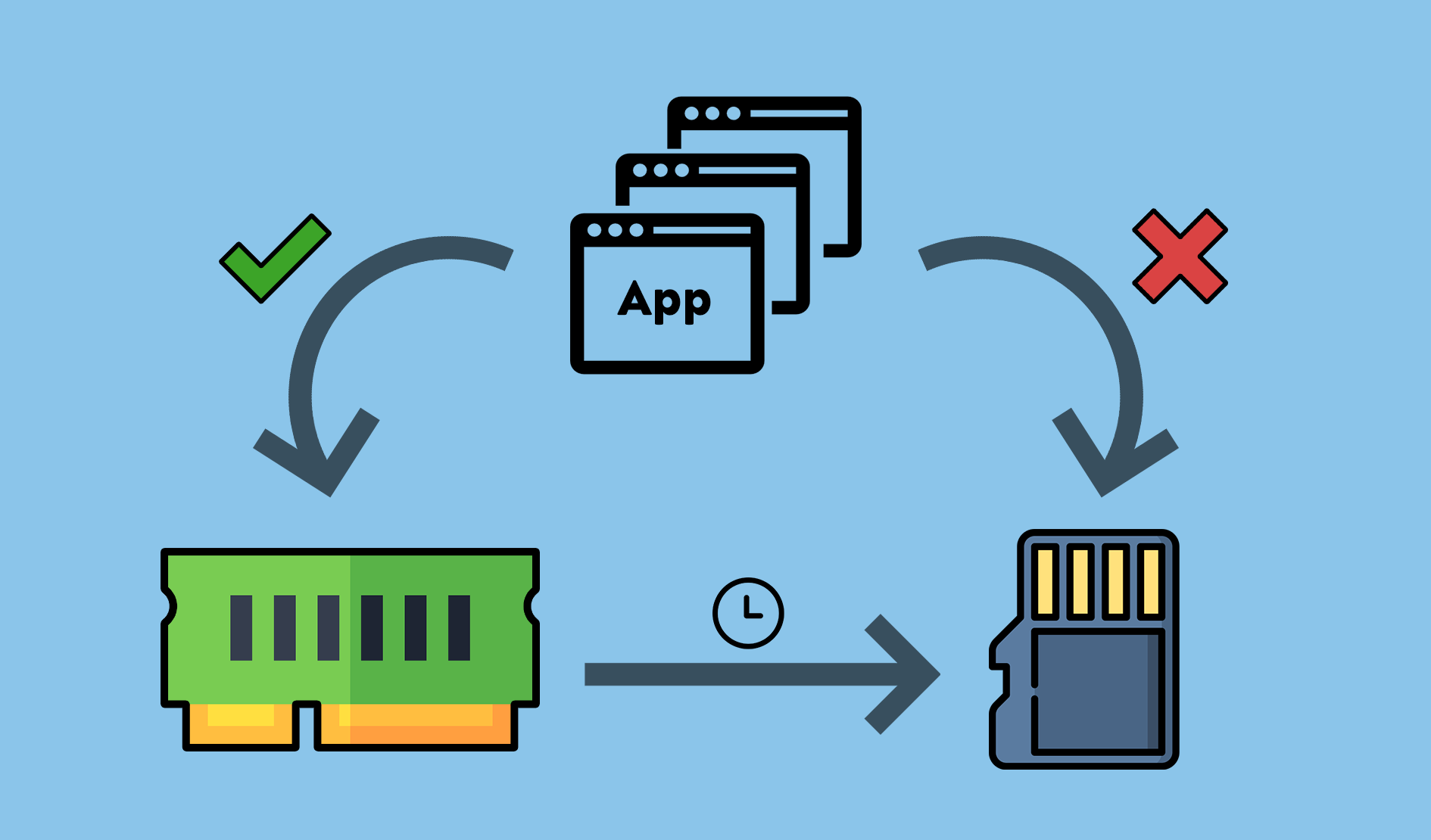

Log2ram is a Linux tool that redirects such writes to memory (RAM) instead of the disk (SD card). It does this by setting aside space in memory as a ramdisk, keeping the existing on-disk /var/log directory reachable at /var/hdd.log, and mounting the ramdisk onto /var/log, where logs are usually written. This way, logging to memory is transparent to all apps: they still log to the same directory, but that directory is now backed by memory instead of the disk.

Persistence of logs

As RAM is volatile and loses its contents when power is lost, logs written to memory must eventually be persisted to disk as well. Log2ram does this by periodically syncing /var/log to the on-disk copy at /var/hdd.log with a cron job, which runs daily by default.
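The sync step can be sketched in a few lines of shell. This is only an illustration of the idea, not log2ram's actual script: `persist_logs` is a hypothetical helper, and the demo runs on throwaway directories rather than the real log directory. It assumes GNU `cp` for the `-u` flag.

```shell
#!/bin/sh
# persist_logs is a hypothetical helper, not part of log2ram itself.
# It copies anything new or changed from a RAM-backed directory to disk.
persist_logs() {
    ram_dir=$1    # directory that lives in RAM
    disk_dir=$2   # on-disk copy that survives a reboot
    mkdir -p "$disk_dir"
    # -r: recurse, -u: skip files that are not newer than the copy on disk,
    # -p: preserve timestamps so the next -u comparison stays meaningful
    cp -rup "$ram_dir"/. "$disk_dir"/
}

# Demo on scratch directories so nothing on the real system is touched.
demo_root=$(mktemp -d)
mkdir -p "$demo_root/ram"
echo "boot ok" > "$demo_root/ram/syslog"
persist_logs "$demo_root/ram" "$demo_root/disk"
cat "$demo_root/disk/syslog"
```

Anything written to the RAM directory after the last call exists only in memory until the next call, which is exactly the window of log loss to keep in mind.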

Word of caution

There is a downside to using log2ram that you should be aware of before implementing it on your system. In the event that the system crashes or experiences a power outage, you may lose valuable logs of events occurring just before the crash.

This is due to the volatility of RAM and the persistence mechanism that log2ram relies on. The crash or outage will most likely occur before the cron job that persists logs to disk has had an opportunity to run, so you lose everything logged since the last sync: anywhere from the last second up to the full sync interval (1 day by default).

If logs are absolutely critical to your setup, I'd suggest either skipping log2ram entirely or setting a very frequent sync interval (under 1 hour), though that severely limits the benefits of this exercise.

Installing log2ram

Now with the pleasantries out of the way, let's get our hands dirty. The installation steps are very similar to zram-swap-config, for a reason that I will reveal later in this piece.

$ sudo apt-get install git

$ git clone https://github.com/azlux/log2ram && cd log2ram

$ chmod +x install.sh && sudo ./install.sh

$ cd .. && rm -r log2ram

Configuring log2ram

log2ram comes installed with sane defaults. But for the sake of the tinker-freaks out there, here's how you can tweak it.

Edit /etc/log2ram.conf and modify the following parameters to your liking.

| Parameter | Explanation |
|---|---|
| SIZE | The amount of space in the memory to reserve for logs. (Default: 40M) |
| USE_RSYNC | Set to true to use rsync -X instead of cp -u when persisting logs in memory to disk. (Default: false) |
| MAIL | Set to false to disable system mail when the ramdisk runs out of space and only log an error. (Default: true) |
| ZL2R | Use a zram drive instead of creating a ramdisk. (Default: false) |
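If you'd rather script these edits than open an editor, something along these lines should work. `set_conf` is a hypothetical helper of my own, not something log2ram ships, and it assumes plain `KEY=VALUE` lines and GNU `sed`. The demo runs on a scratch file with made-up contents; point it at the real config (as root) only once you're happy with the result.

```shell
#!/bin/sh
# set_conf is a hypothetical helper: set KEY=VALUE in a shell-style config file.
set_conf() {
    key=$1
    value=$2
    file=$3
    if grep -q "^${key}=" "$file"; then
        # Replace the existing assignment in place (GNU sed syntax).
        sed -i "s|^${key}=.*|${key}=${value}|" "$file"
    else
        # Key not present yet, so append it.
        echo "${key}=${value}" >> "$file"
    fi
}

# Try it on a scratch file first rather than the live config.
conf=$(mktemp)
printf 'SIZE=40M\nUSE_RSYNC=false\nMAIL=true\nZL2R=false\n' > "$conf"
set_conf SIZE 100M "$conf"
set_conf ZL2R true "$conf"
cat "$conf"
```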

By now you've probably realized what I'm about to talk about next, but for the sake of those who are having a bad day or experiencing high brain latencies, here's what's even more impressive about log2ram:

Log2ram integrates with zram for space-efficient storage of logs (😱!!!1111oneone)

Bonus: Integration with zram

Before I get into it, for the uninitiated, I'll just take a minute to explain why this is a huge deal.

Why is this awesome?

Text data and compression go hand in hand. Text, especially English text, is built from a relatively small set of characters (~128 if ASCII), and the same words and phrases recur again and again. From a machine's perspective, that is a LOT of repetition. By storing each repeated sequence once and referring back to it by position instead of storing the text itself every time, we can save a ton of space, and that is essentially what compression does.

Logs are a great example of such compressible data. With advances in CPU instruction sets, compression has become a relatively cheap operation on modern processors, so it saves space at very little performance cost.
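You can get a feel for this with a quick experiment. The sketch below writes a few thousand made-up, syslog-style lines to a temporary file and compares the size before and after compression. Note the assumptions: the log lines are synthetic (and more repetitive than real logs), and `gzip` is used as a stand-in only because it is available almost everywhere, so the exact ratio will differ from what zram achieves.

```shell
#!/bin/sh
# Generate synthetic, syslog-style lines (invented for this demo).
sample=$(mktemp)
i=0
while [ "$i" -lt 2000 ]; do
    echo "Jan 01 00:00:00 raspberrypi sshd[1234]: Accepted publickey for pi from 192.168.1.10 port 5$i" >> "$sample"
    i=$((i + 1))
done

# Compare the raw size against the gzip-compressed size.
raw_bytes=$(wc -c < "$sample")
packed_bytes=$(gzip -c "$sample" | wc -c)
echo "raw: $raw_bytes bytes, gzip: $packed_bytes bytes"
awk -v r="$raw_bytes" -v p="$packed_bytes" 'BEGIN { printf "ratio: %.1fx\n", r / p }'
```

Swap in a copy of one of your own log files for the synthetic sample to see a more realistic number.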

What I'm saying here is that we can store up to 5X more logs in the same amount of space in the memory reserved for log2ram. To put things into perspective, the default setting of 40M will allow for 200M worth of logs, a number you'll probably never hit on a Raspberry Pi.

Configuring log2ram for zram-swap-config

If you haven't installed zram-swap-config, do refer to my previous post first to get it set up before reading on. If you have already done so, edit /etc/log2ram.conf and modify the following parameters to suit your system.

| Parameter | Explanation |
|---|---|
| COMP_ALG | Compression algorithm to use. |
| LOG_DISK_SIZE | Estimated uncompressed disk size. This value can be calculated as SIZE * expected compression ratio. |

Expected compression ratios for LOG_DISK_SIZE configuration (Source):

| Compressor | Ratio | Compression speed | Decompression speed |
|---|---|---|---|
| zstd 1.3.4 -1 | 2.877 | 470 MB/s | 1380 MB/s |
| zlib 1.2.11 -1 | 2.743 | 110 MB/s | 400 MB/s |
| brotli 1.0.2 -0 | 2.701 | 410 MB/s | 430 MB/s |
| quicklz 1.5.0 -1 | 2.238 | 550 MB/s | 710 MB/s |
| lzo1x 2.09 -1 | 2.108 | 650 MB/s | 830 MB/s |
| lz4 1.8.1 | 2.101 | 750 MB/s | 3700 MB/s |
| snappy 1.1.4 | 2.091 | 530 MB/s | 1800 MB/s |
| lzf 3.6 -1 | 2.077 | 400 MB/s | 860 MB/s |
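To make the SIZE * ratio rule concrete, here is the arithmetic as a tiny shell function. `log_disk_size` is a hypothetical helper for illustration only. It takes SIZE in megabytes and a ratio, and rounds down to a whole number of megabytes.

```shell
#!/bin/sh
# log_disk_size is a hypothetical helper: SIZE (in MB) times the expected ratio.
log_disk_size() {
    awk -v size_mb="$1" -v ratio="$2" 'BEGIN { printf "%dM\n", size_mb * ratio }'
}

log_disk_size 40 2.101    # default SIZE with the lz4 benchmark ratio: prints 84M
log_disk_size 100 4.00    # SIZE=100M with an assumed text-only ratio of 4.00: prints 400M
```

Treat the result as an estimate: if your logs compress worse than the ratio you plugged in, the drive will fill up before it reaches LOG_DISK_SIZE.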

Once you are done configuring log2ram, reboot your Raspberry Pi and you're all set.
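After the reboot, it's worth a quick check that /var/log is no longer sitting on the SD card. A minimal sketch, assuming GNU `df` (for the `-T` flag); `fs_type_of` is a hypothetical helper, not a log2ram command.

```shell
#!/bin/sh
# fs_type_of is a hypothetical helper: print the filesystem type behind a path.
fs_type_of() {
    # -P keeps each entry on one line, -T adds the filesystem type column.
    df -PT "$1" | awk 'NR == 2 { print $2 }'
}

# Only inspect /var/log if it exists on this machine.
if [ -d /var/log ]; then
    echo "/var/log is backed by: $(fs_type_of /var/log)"
    df -h /var/log
fi
```

With the default ramdisk I'd expect to see tmpfs here, and with ZL2R enabled the device column of `df -h` should point at a zram device instead of the SD card's root partition. The size shown should roughly match what you configured.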

My configuration for Raspbian on Raspberry Pi 3B

SIZE=100M

USE_RSYNC=false

MAIL=true

PATH_DISK="/var/log"

ZL2R=true

COMP_ALG=lz4

LOG_DISK_SIZE=400M

- SIZE was set to 100M as I'm running Kubernetes and tend to have, on average, 10 pods per node. The ramdisk tends to run out of space at lower values.

- COMP_ALG was set to the same compression algorithm used in zram-swap-config, which was itself limited by system availability to lz4.

- LOG_DISK_SIZE was set to 400M, with an estimated real-world compression ratio of 4.00. This is significantly higher than the benchmark ratios above, as those were obtained from compressing mixed data, while in this case we're only compressing text, which happens to be very compressible.

And just like that, you now no longer have to worry about the shortened lifespans of those pesky SD Cards. Enjoy your newfound confidence in your Raspberry Pi!